Industrial AI without the MLOps headache. Train on your data, deploy to the edge or cloud with one click. No Kubernetes. No data science degree required.

ONNX is an open standard for ML model interchange. Train in PyTorch, TensorFlow, or scikit-learn, export once, then run anywhere: edge CPU, GPU, NPU, or cloud.

Framework-agnostic. Switch training frameworks, for example from PyTorch to TensorFlow, without rewriting inference code: ONNX Runtime serves the exported model either way.

ONNX Runtime applies hardware-specific graph optimizations. Faster inference, lower latency at the edge.

Runs on ARM CPUs, Intel NPUs, Nvidia GPUs — even a Raspberry Pi. No cloud dependency for inference.
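For readers who want to see what running an exported model looks like, here is a minimal sketch using the ONNX Runtime Python API. The helper names `load_session` and `predict`, the single-input/single-output model shape, and the default provider list are assumptions made for illustration, not part of the product.

```python
from typing import Any, Sequence


def load_session(model_path: str, providers: Sequence[str] = ("CPUExecutionProvider",)):
    """Open an ONNX model with graph optimizations explicitly set to the highest level."""
    # Imported lazily so predict() below stays usable without onnxruntime installed.
    import onnxruntime as ort

    options = ort.SessionOptions()
    options.graph_optimization_level = ort.GraphOptimizationLevel.ORT_ENABLE_ALL
    return ort.InferenceSession(model_path, sess_options=options, providers=list(providers))


def predict(session: Any, batch: Any) -> Any:
    """Feed one batch to the model's first input and return its first output."""
    input_name = session.get_inputs()[0].name
    # Passing None as the output list asks the runtime for every model output.
    return session.run(None, {input_name: batch})[0]
```

On a GPU host the same code could be pointed at different hardware by passing `providers=("CUDAExecutionProvider", "CPUExecutionProvider")`; the inference call itself would not change.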

ONNX is Apache 2.0 licensed; ONNX Runtime is MIT licensed. Supported by Microsoft, Meta, Google, AWS. No vendor lock-in.

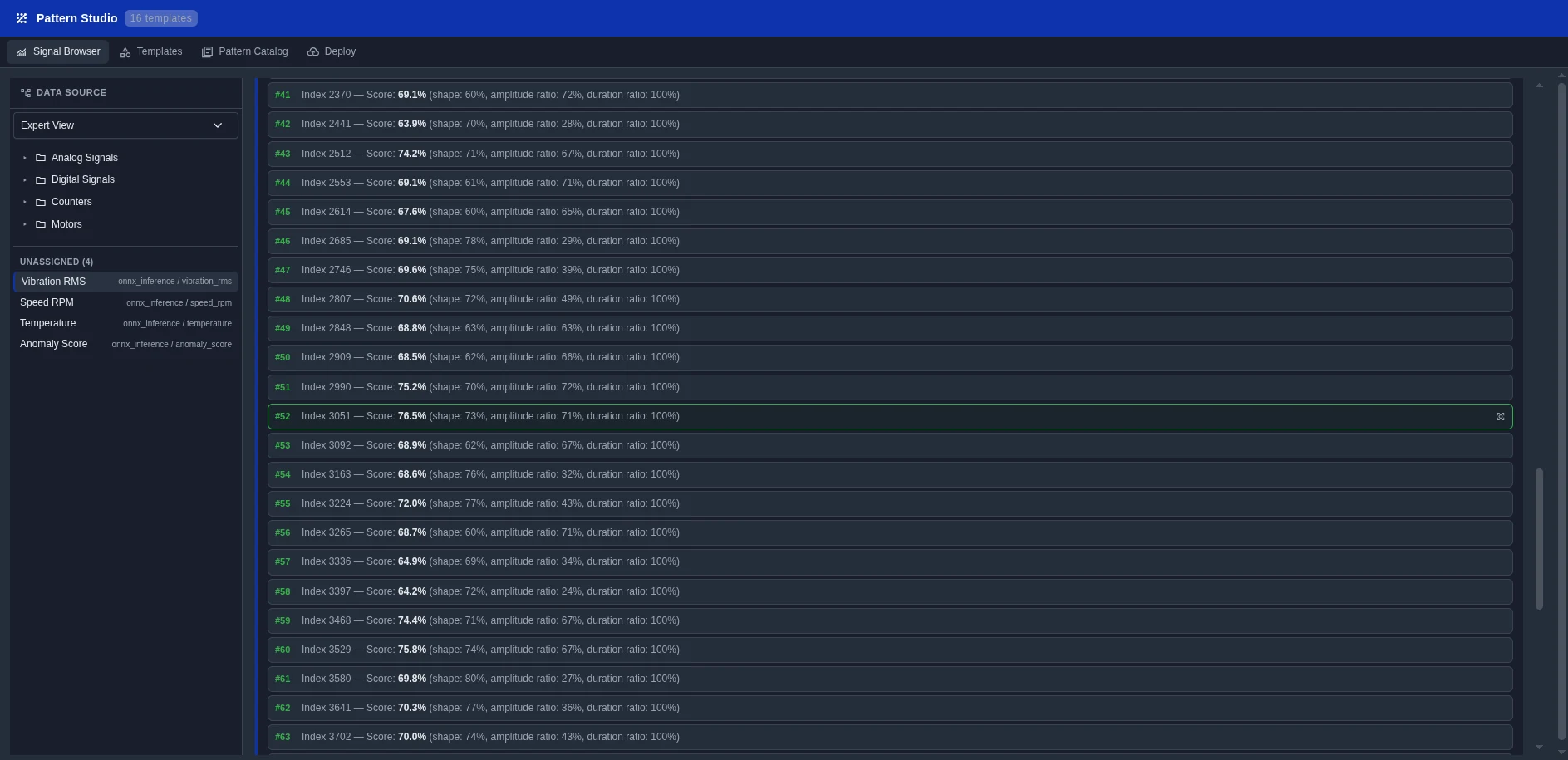

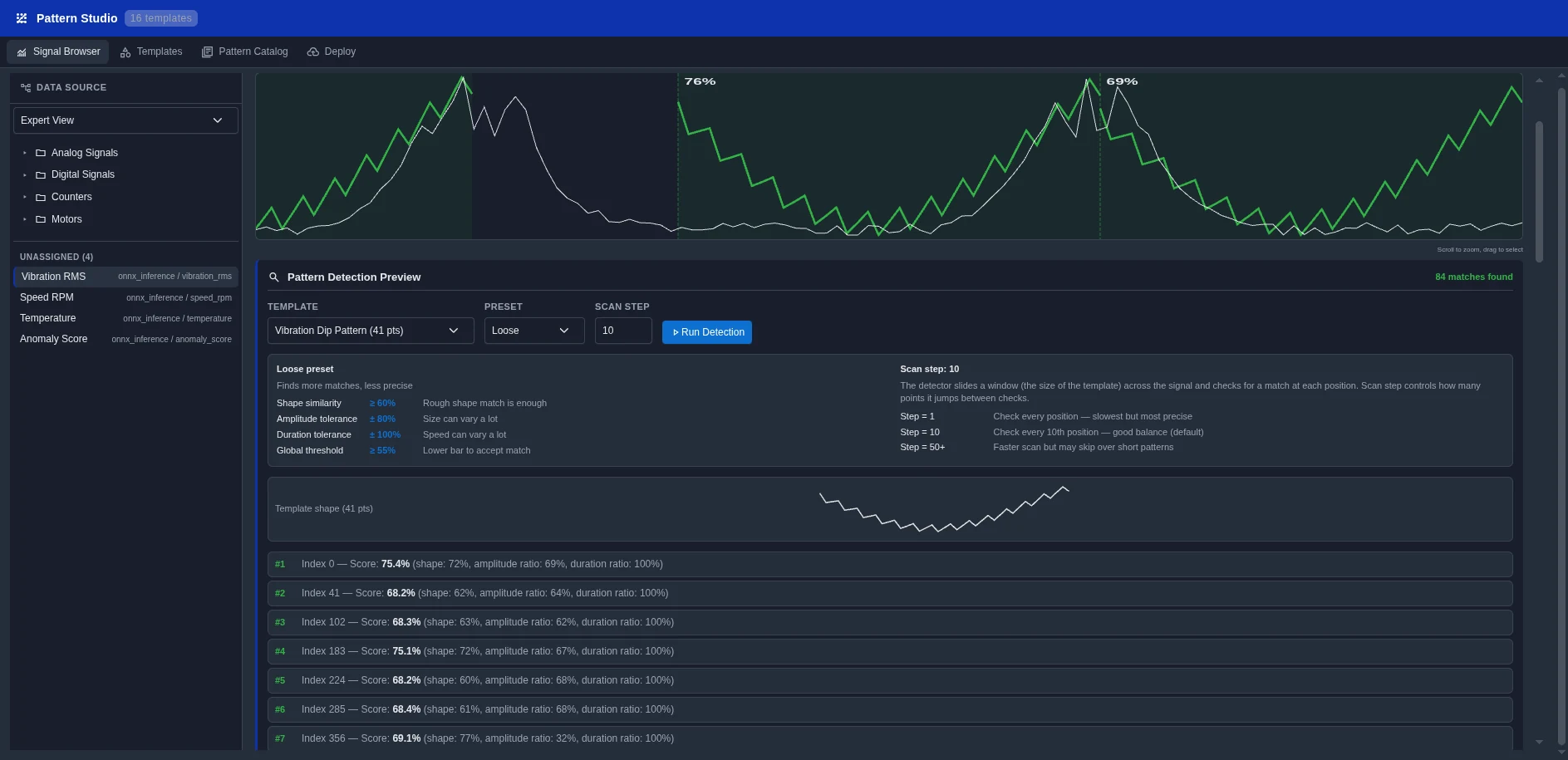

A library of pre-built prediction patterns, ready to plug into your data pipeline.

Drag-and-drop model deployment. Pick a pattern, supply training data, click Deploy. No Kubernetes or MLOps knowledge required.

Each pattern is a proven ML pipeline, pre-configured for industrial data.

Google TimesFM pre-trained on 100B+ data points. Zero-shot forecasting on any tag — no training, no tuning. Detect excursions minutes, hours, or days ahead.
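To make "detect excursions ahead of time" concrete, the sketch below takes a forecast that has already been produced and reports how far ahead the first limit violation lies. It does not call TimesFM; the forecast is assumed to be a plain list of future values at a fixed step, and the function name and the single high limit are illustrative assumptions.

```python
from datetime import timedelta
from typing import Optional, Sequence


def time_to_excursion(
    forecast: Sequence[float], high_limit: float, step: timedelta
) -> Optional[timedelta]:
    """Lead time until the forecast first exceeds high_limit, or None if it never does."""
    # forecast[0] is assumed to be one step ahead of "now".
    for steps_ahead, value in enumerate(forecast, start=1):
        if value > high_limit:
            return steps_ahead * step
    return None
```

A forecast sampled every 10 minutes whose third value crosses the limit would give a 30-minute warning.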

Unsupervised auto-encoder learns normal behaviour and raises alerts when signals deviate. Works from day one, no labelled failures needed.
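The alerting logic behind this pattern can be sketched without a deep-learning framework. In the example below, `reconstruct` stands in for a trained auto-encoder (any callable that maps a sample to its reconstruction), and the mean-plus-k-sigma threshold is one common choice assumed here for illustration, not necessarily the rule the product applies.

```python
import statistics
from typing import Callable, Sequence

Vector = Sequence[float]


def reconstruction_error(sample: Vector, reconstruct: Callable[[Vector], Vector]) -> float:
    """Mean squared error between a sample and the model's reconstruction of it."""
    rebuilt = reconstruct(sample)
    return sum((a - b) ** 2 for a, b in zip(sample, rebuilt)) / len(sample)


def fit_threshold(normal_errors: Sequence[float], k: float = 3.0) -> float:
    """Alert threshold: mean plus k standard deviations of errors seen on normal data."""
    return statistics.fmean(normal_errors) + k * statistics.pstdev(normal_errors)


def is_anomalous(sample: Vector, reconstruct: Callable[[Vector], Vector], threshold: float) -> bool:
    """True when the sample is reconstructed worse than normal data ever was."""
    return reconstruction_error(sample, reconstruct) > threshold
```

Because the threshold is fitted only on errors from normal operation, no examples of failures are needed to set it.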

Regression model trained on your equipment's failure history predicts time-to-failure in hours, days, or cycles.
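As a rough illustration of the idea, the sketch below fits a one-variable least-squares line from a health indicator (say, a vibration level recorded before past failures) to the hours that remained at that moment. A real pattern would use many features and a richer model; the function names and the single-feature setup are assumptions.

```python
from typing import Sequence, Tuple


def fit_line(x: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict_hours_to_failure(model: Tuple[float, float], indicator: float) -> float:
    """Predicted remaining hours, clamped at zero so the estimate never goes negative."""
    slope, intercept = model
    return max(0.0, slope * indicator + intercept)
```

The model is just the `(slope, intercept)` pair returned by `fit_line`, so it can be stored and reused without refitting.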

Estimate unmeasured variables (chemistry, quality, efficiency) from correlated sensor data. Replace costly lab measurements.

Score each production run against a reference 'golden' trajectory. Catch deviations early before they become defects.
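One simple way to score a run against a golden trajectory is sketched below. It assumes the run and the reference are sampled at the same time steps (no time warping), that the run has at least one sample, and that a fixed tolerance band is acceptable; the function names are illustrative.

```python
from typing import List, Optional, Sequence


def deviations(run: Sequence[float], golden: Sequence[float]) -> List[float]:
    """Absolute deviation from the golden trajectory at each shared time step."""
    # zip stops at the shorter series, so a run still in progress can be scored.
    return [abs(r - g) for r, g in zip(run, golden)]


def run_score(run: Sequence[float], golden: Sequence[float]) -> float:
    """Root-mean-square deviation over the steps seen so far (0.0 means identical)."""
    devs = deviations(run, golden)
    return (sum(d * d for d in devs) / len(devs)) ** 0.5


def first_deviation(
    run: Sequence[float], golden: Sequence[float], tolerance: float
) -> Optional[int]:
    """Index of the first step outside the tolerance band, or None if all are inside."""
    for i, d in enumerate(deviations(run, golden)):
        if d > tolerance:
            return i
    return None
```

Scoring only the steps completed so far is what allows a deviation to be flagged while the run is still in progress.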

Multi-variable optimiser suggests setpoint changes to minimise energy, maximise yield, or hit target quality.
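The shape of the problem (minimise one objective while holding another above a target) can be shown with a deliberately simple exhaustive grid search. The source does not say which optimisation method the product uses, so the search strategy here is a stand-in, and `energy` and `quality` are hypothetical process models supplied by the caller.

```python
from itertools import product
from typing import Callable, Iterable, Optional, Tuple

Setpoints = Tuple[float, ...]


def suggest_setpoints(
    candidates: Iterable[Iterable[float]],
    energy: Callable[[Setpoints], float],
    quality: Callable[[Setpoints], float],
    min_quality: float,
) -> Optional[Setpoints]:
    """Lowest-energy combination of candidate setpoints that still meets min_quality."""
    # product(*candidates) enumerates every combination, one value per setpoint.
    feasible = [sp for sp in product(*candidates) if quality(sp) >= min_quality]
    return min(feasible, key=energy) if feasible else None
```

Returning `None` when no combination meets the quality target keeps the suggestion advisory: nothing is proposed when the constraint cannot be satisfied.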

ONNX-based vision models for quality inspection, safety monitoring, and process visualisation — tuyere cameras included.

Industream integrates with the Model Context Protocol (MCP) so any LLM can query live plant data, run calculations, and trigger actions through typed tools.

"What's the temperature trend on furnace 3 over the last 8 hours?" — The LLM queries DataBridge via MCP and answers in natural language.

An LLM agent monitors anomaly scores and drafts a maintenance ticket with root-cause context, ready for human review.

Trigger FlowMaker pipelines via MCP tools. The agent decides when and what to run based on live conditions.

Industream exposes plant data and actions as MCP tools. Any MCP-compatible LLM can connect: frontier or fully sovereign, cloud or on-prem, behind your firewall. Your data never leaves your perimeter unless you want it to.
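To show what a "typed tool" means in MCP terms, the sketch below builds a tool descriptor with a JSON Schema for its inputs and dispatches a JSON-RPC `tools/call` request to a local handler. The tool name `get_tag_trend`, its parameters, and the dispatcher are hypothetical examples, not Industream's actual tool catalogue; only the message shapes follow the MCP specification.

```python
from typing import Any, Callable, Dict

# Hypothetical tool descriptor, in the shape an MCP server returns from tools/list.
TAG_TREND_TOOL: Dict[str, Any] = {
    "name": "get_tag_trend",  # assumed name, not an Industream identifier
    "description": "Return recent values for one plant tag.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "tag": {"type": "string"},
            "hours": {"type": "number", "minimum": 0},
        },
        "required": ["tag", "hours"],
    },
}


def handle_tools_call(
    request: Dict[str, Any], handlers: Dict[str, Callable[..., str]]
) -> Dict[str, Any]:
    """Dispatch a JSON-RPC tools/call request to a local handler function."""
    params = request["params"]
    handler = handlers.get(params["name"])
    if handler is None:
        # Unknown tool names are reported as a JSON-RPC "invalid params" error.
        return {
            "jsonrpc": "2.0",
            "id": request["id"],
            "error": {"code": -32602, "message": f"Unknown tool: {params['name']}"},
        }
    text = handler(**params.get("arguments", {}))
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    }
```

The schema is what lets an LLM client build valid arguments for a tool it has never seen, and the dispatcher runs entirely inside the plant network, which is how data can stay behind the firewall.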

Not a bolt-on. AI Engine is the optional compute branch between FlowMaker and DataLake. Disable it when you only need raw storage — enable it when you're ready to predict.

AI/ML Workers (highlighted) is the optional compute branch — disable when not needed, enable when ready to predict.

Connect your data in DataLake. Pick a pattern in Pattern Studio. Deploy. The whole loop takes under 2 hours.